Artificial Intelligence

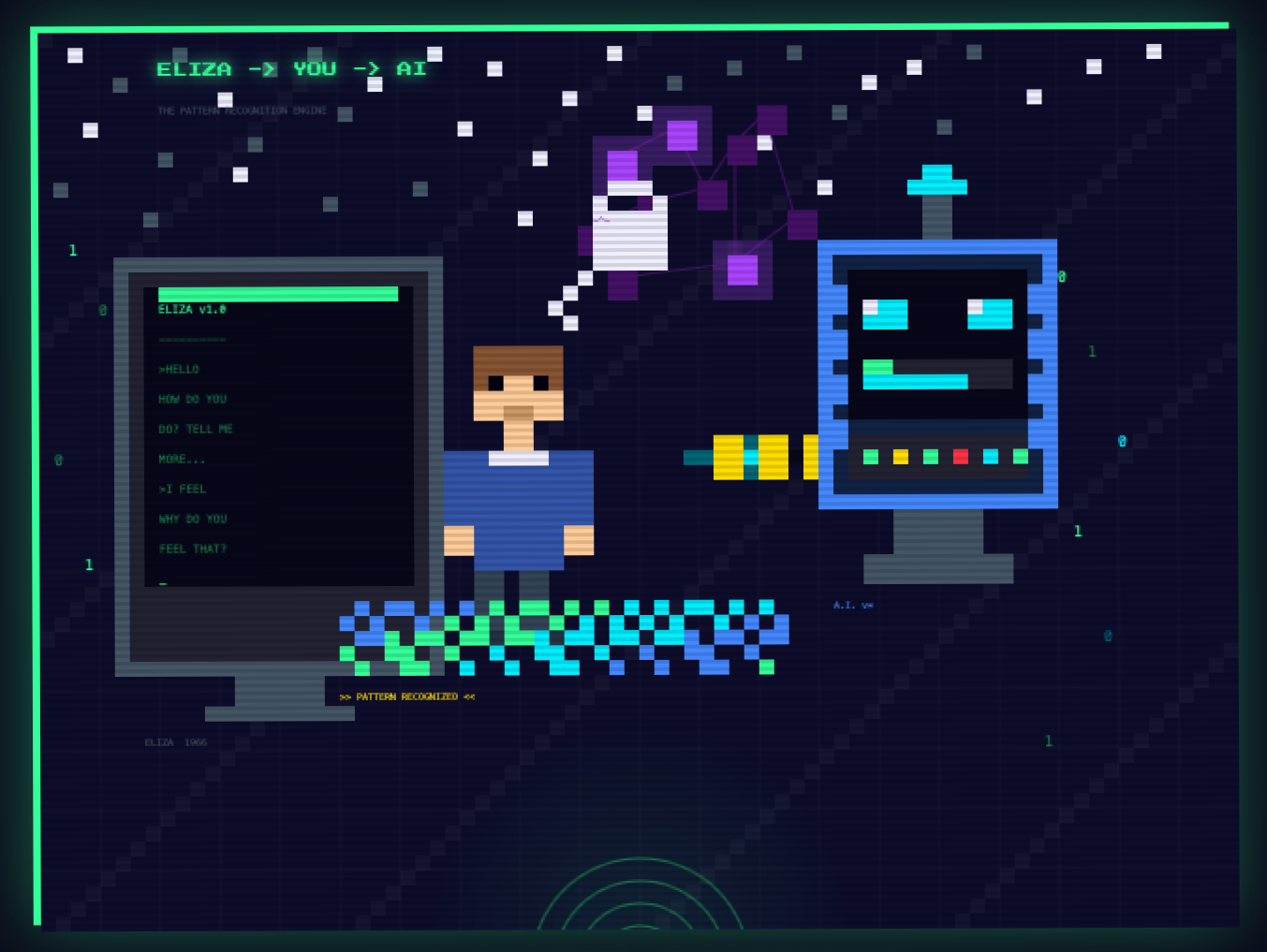

What Eliza Started: Human/Computer Communication

Since I have more free time than I’ve had in many years to explore my interests in more depth, I’ve been looking at and experimenting with Artificial Intelligence. It’s here now and it’s not going anywhere. I’ve always enjoyed being at the forefront of technology, but this feels different. I remember playing with Eliza, the natural language processing computer program developed in the ’60s to explore what communication between humans and machines could look like. It was very basic and mostly just repeated your comments back as a question, psychotherapy style. It was pretty uncanny at first, but it was very limited and we got bored with it pretty quickly. Still, it sparked an interest, as I know it did for many people, in what the future of human/computer interaction would look like.

In my recent explorations with AI (I’ve used several different platforms for different tasks), I’ve had some slightly uncanny conversations with the chatbots. I’ve asked them for feedback on my resume, with good results. I gave one full access to all of my notes (my digital brain: a journal, articles that inspire me, job search notes, recipes, etc.) and asked it to summarize what kind of person it thinks I am and what values are reflected in my notes. It’s a little surreal to have a conversation with an AI bot about yourself, but I feel like it has given me insight into some things I hadn’t thought about in that way before. For example, I feel like I have a good handle on my core values, but the conversation brought up some other things that I know are important to me; I just hadn’t elevated them to the level of a core value.

I think about this kind of stuff a lot. I’ve been reading about metacognition lately, which Wikipedia defines as “an awareness of one’s thought processes and an understanding of the patterns behind them.” That sounds right to me. I think a lot and I think about thinking a lot. Yes, it’s a rabbit hole. Hey, there was a reason I dove headfirst into Philosophy in college and ended up majoring in it. I like knowing what makes people tick, but I really like knowing what makes me tick. I’ve always believed that the moment we stop pushing ourselves to learn about the world and about ourselves, we may as well just quit. I’m always trying to find new ways to evolve and explore how I experience the world. The recent conversation I had with the chatbot about my notes was enlightening, frightening, and perhaps a little self-absorbed, but it gave me some interesting things to think about and explore further.

I feel like I should say something about my stance on AI. I think it can be a powerful tool when used correctly. It can also be very dangerous and make us lazy when relied on too heavily. I don’t think it should be used to replace human thought, but to supplement it. I’ve often used it to get started on a policy for work or a technical email, but I always rewrite the result in my own voice. I like to use AI as a collaborative tool: not in place of my work or other people’s input and thoughts, but in addition to them. It’s also very good at some of the more tedious tasks, like proofreading.

Back when I was experimenting with Eliza, I quickly realized that she couldn’t really know me. She simply reflected my own words back to me and left it to me to find any meaning. What’s so eerie and striking about AI these days is that it can actually synthesize, find patterns, make connections, and sometimes see things about you that you’ve been too close to notice. That’s powerful and, yes, a little unsettling. But I think that’s the point. The best tools don’t just make things easier; they make you think harder. Used that way, AI isn’t replacing human thought; it’s provoking it. And for someone who thinks about thinking for fun (and profit?), that feels like exactly the right kind of rabbit hole.